One of the central challenges of introducing artificial intelligence (AI) to any industry is that its promise and peril are so entwined. The tools that automate routine tasks, such as scheduling appointments or writing code, can reduce drudgery and boost productivity but also eliminate jobs. Algorithms that interpret images and enhance diagnostic accuracy can erode clinicians’ skills. Meanwhile, the data centers powering breakthroughs in disease treatment deplete fresh water and drive up electricity use, causing environmental harm, particularly to the communities nearest them.

This dichotomy is mirrored in divisions between people who believe AI can make any industry faster, cheaper, and more efficient, and those who focus on AI’s potential to discriminate and amplify harm. In sectors where jobs can be easily automated, the rosier view of AI tends to prevail. But in health care — where the consequences of AI’s missteps are high, and human expertise can’t be readily replaced — organizations are compelled to strike a balance between innovation and caution.

This issue of Transforming Care looks at how employees of health care systems of different sizes are working to make AI useful in solving business problems and improving care, while also mitigating the risk of harm to patients and institutional reputations. We explore how they distinguish promising ideas from wishful thinking and prioritize use cases when the list of health care’s intractable problems seems so long. They also share how they tackle common impediments to AI’s advancement — by earning the trust of clinicians and by ensuring that what works in one setting will work in a new environment with different patients, different staff, and different financial incentives.

“The variation in environments is part of the reason we haven’t seen an AI-enabled change in a treatment paradigm that dramatically improves outcomes across the U.S.,” says Mark Sendak, MD, MPP, the former population health and data science lead at the Duke Institute for Health Innovation, which supports clinicians in leveraging machine learning and data science to solve clinical problems. “AI hasn’t had its penicillin moment because the context of the implementation really shapes the outcome.”

Indeed, as the examples that follow show, AI’s current value may rest not only in achieving a particular outcome but also in the process of adopting or adapting to it. That’s because AI’s usefulness hinges on testing and refinement in real-world settings. And in high-stakes environments like hospitals and health clinics, that requires new levels of vigilance as well as collaboration across disciplines to avoid harm.

Challenge No. 1: Deciding Where to Invest

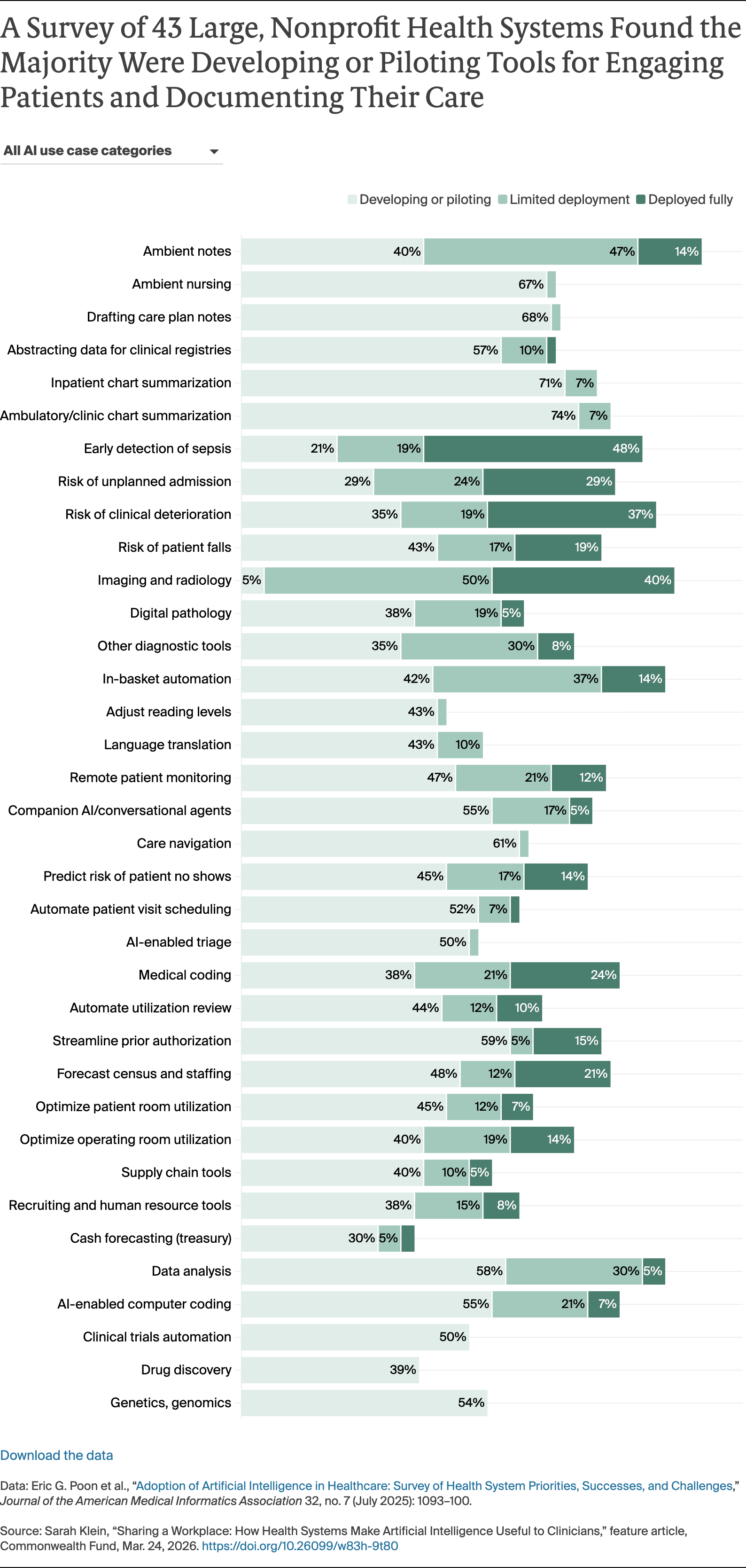

To be clear, artificial intelligence is not new to health care. Over the past two decades, health systems have used predictive AI models to sift through mountains of data from electronic health records (EHRs), radiology images, and billing records to generate new insights about diseases and the efficiency of health system operations. These tools, which rely on large datasets to discern patterns that can be used to infer future outcomes, have enhanced clinical decision-making by, for example, predicting an individual patient’s course of disease, their odds of developing surgical complications, or their response to immunotherapy. They also have helped increase efficiency by forecasting the need for staff, beds, and supplies based on disease outbreaks and seasonal variation. And they have closed gaps in care that contribute to poor health outcomes, for instance by identifying and prioritizing patients who need follow-up care.

As the mathematical models that drive predictive forms of AI have become more sophisticated, and the data on which they rely more robust and less expensive to manipulate, such models have begun to outperform humans. In small-scale deployments, health systems have shown these tools can detect signs of pancreatic cancer and sepsis earlier than humans do, though challenges to realizing their potential abound.

Less dramatic, but of enormous value to clinician productivity and morale, are the newer generative AI tools that produce text and images in response to prompts. When health systems roll these out in the form of ambient scribes that capture and summarize critical details of medical visits, or as tools that take a first pass at filling out prior authorization requests, they can lessen after-hours work known as “pajama time,” a key contributor to burnout. “I’m hearing people say things like, ‘I’ve got my life back.’ When was the last time you heard someone say that about a piece of technology?” says Armando Bedoya, MD, MMCi, the chief analytics and medical informatics officer at Duke University Health System.

The proliferation of potential use cases and vendors pitching off-the-shelf products requires health systems to make choices about where to invest scarce resources for evaluation and monitoring. Without a deliberative process that weighs the benefit to patients and doctors against AI’s impact on the bottom line, the latter tends to win out, says Jono Hoogerbrug, MD, a primary care physician in Auckland, New Zealand, and a former Commonwealth Fund Harkness Fellow in Health Care Policy and Practice who spent a year at Stanford University studying organizational behavior surrounding AI adoption. “A lot of the decision-making sits at the C-suite level, where the value proposition is often based on return-on-investment,” he says. “That’s important to financial sustainability, but it can lead to a misalignment between an institution’s financial interests and the needs of patients or clinicians.”

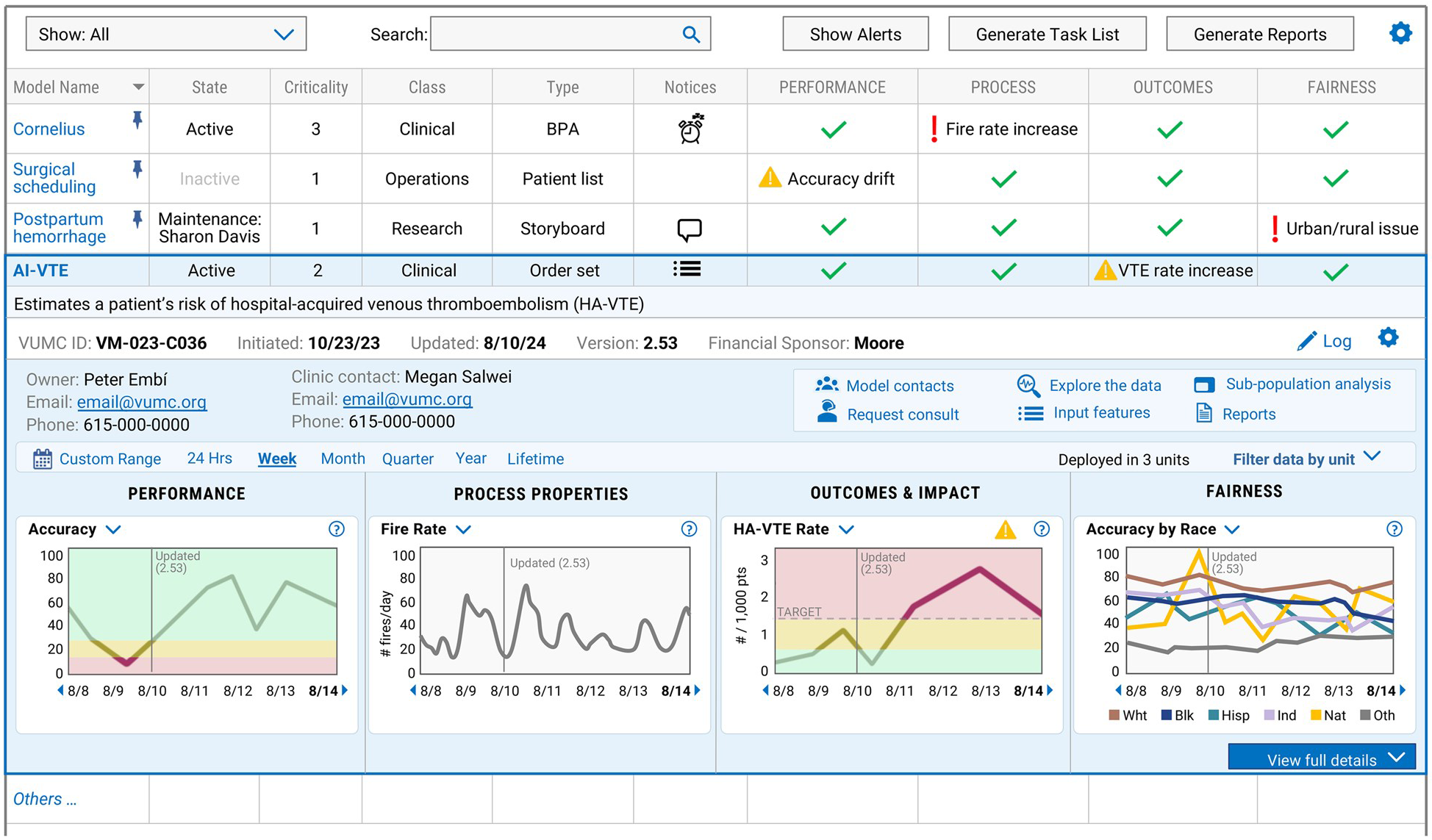

At large health systems like Duke and the Mayo Clinic, the decision to adopt a particular AI model has been delegated to local hospitals, departments, and specialties, while aspects of vetting and evaluating use cases are centralized at the enterprise level. Like Vanderbilt Health, these health systems have established structured processes and multidisciplinary teams to guide staff in assessing whether a particular model works as intended. The Duke Institute for Health Innovation and Vanderbilt’s ADVANCE (AI Discovery and Vigilance to Accelerate Innovation and Clinical Excellence) Center bring faculty with expertise in computer science, data informatics, ethics, and anthropology together with health system administrators and clinicians to identify which models suggested by staff or outside vendors may require additional oversight to mitigate clinical, operational, or regulatory risks. The Mayo Clinic embeds aspects of the validation process in the Mayo Clinic Platform, a division that partners with AI start-ups and more established companies to test and refine models using de-identified patient data, with a goal of scaling promising solutions worldwide.

In guiding staff, all three institutions ask similar questions: How big is the problem the tool seeks to solve? Is there a simpler, cheaper solution already available on the market? If not, what’s the source and quality of data on which the model will rely? And what measures exist, or would need to be created, to test its effectiveness and monitor its impact over time?

Since it was launched in 2020, the Mayo Clinic Platform has focused largely on exploring innovations it hopes will improve outcomes for large numbers of patients, increase efficiency, or improve workforce retention. Vendors that aren’t “values aligned,” such as those developing algorithms that could be used to delay or deny care, are filtered out. Meanwhile, solutions that address pain points for clinicians by, for example, distilling critical details from thousands of pages of medical records for second-opinion consultations, often advance. Some projects, like one that produced 3D avatars of Mayo Clinic clinicians, target all three priorities at once. After hours, the avatars may soon answer basic patient questions such as “How long do I need to wear this cast?” Trained on prior video visits, the avatars mirror an individual’s speech patterns and behaviors so closely that they are nearly indistinguishable from their human counterparts, says John Halamka, MD, MS, the Dwight and Dian Diercks President of the Mayo Clinic Platform. “The only thing I’ve noticed is the avatars gesture too much,” he says.

Challenge No. 2: Creating a More Accurate Representation of Patients

Unlike digital apps and social media platforms that enable real-time tracking of people’s movements, reading tastes, and purchasing habits, the data captured in EHRs present an imperfect view of patients and their needs. Even when they span decades, EHR data are based on a limited set of observations, recorded in idiosyncratic ways that often reflect individual and societal biases.

Vanderbilt researchers, for instance, found that providers in hospitals in Chicago and Nashville spent more time reviewing medical records and updating clinical notes for white patients and people who pay cash than for other patients. That practice undermines the reliability of AI tools that depend on documentation, such as early-warning systems that scan medical records and flag signs of clinical deterioration in hospitalized patients. Data on patients who face economic or logistical barriers in accessing care are also more limited or missing altogether.

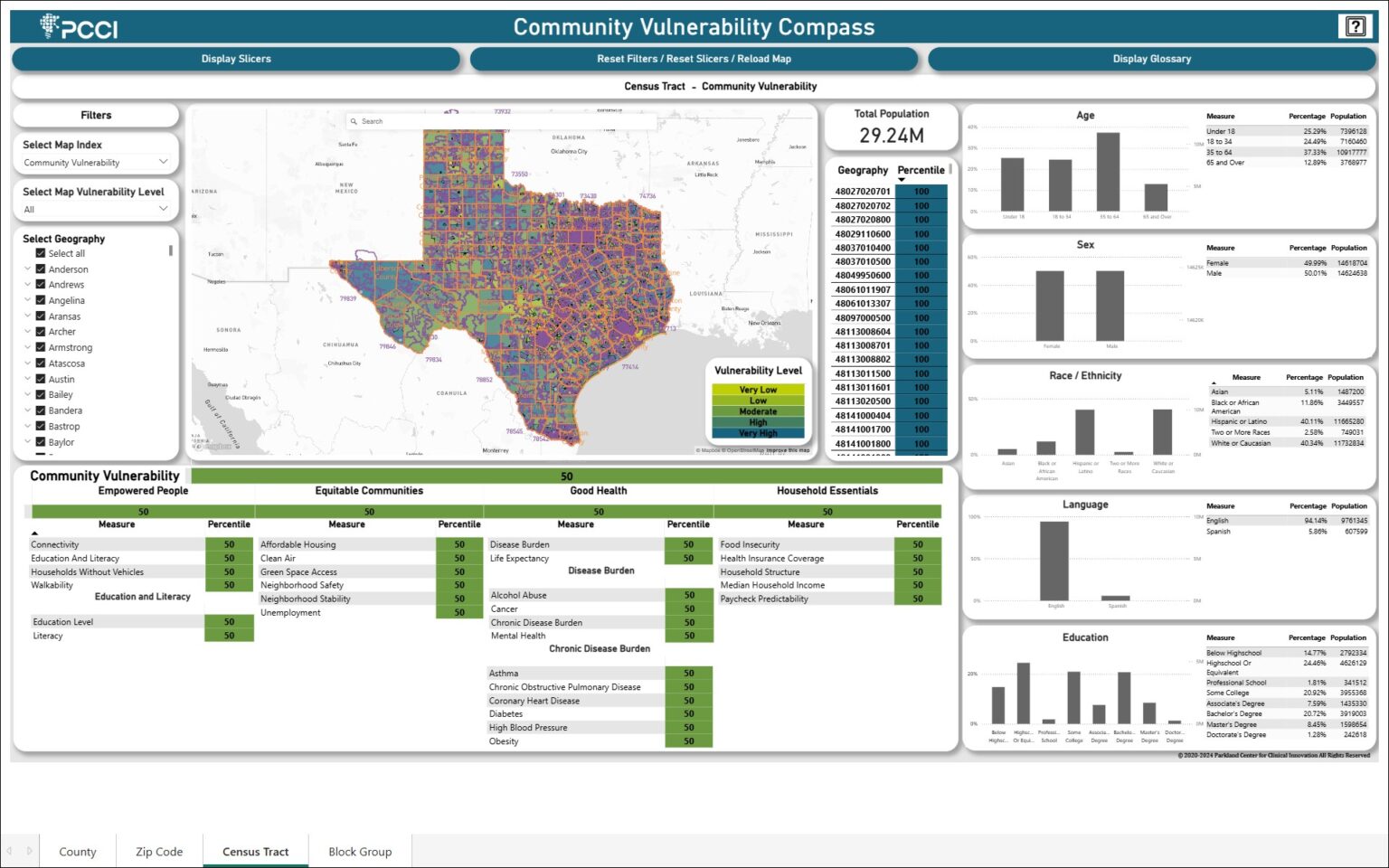

If these gaps aren’t accounted for, AI models can exacerbate disparities by drawing more attention and resources to patients who already have access to medical care and neglecting sicker people who don’t. Generalizing findings from AI models that were trained on data on patients in just a handful of states can also be problematic if the social, behavioral, and ethnic characteristics of patients in one region differ substantially from those in another. To ensure AI tools learn from local data, PCCI, a nonprofit research firm that was spun out of Parkland Health and Hospital System in Dallas, built a custom dataset that it’s using to identify unique risk factors that low-income patients face and the ways neighborhood-level conditions affect their health outcomes and access to care. PCCI’s cloud-based platform combines de-identified clinical data from Parkland and more than 100 other hospitals and health systems in the Dallas area. Using geocoding, the records are linked to publicly reported information on local crime rates, air quality, and accessibility of transportation, grocery stores, and green spaces, among some 26 nonmedical drivers of health.
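For readers curious about what such a linkage looks like in practice, the following is a minimal sketch in Python, not PCCI’s actual pipeline. It assumes each de-identified record has already been geocoded to a census block identifier. The field names (`block`, `smoking_rate`, `food_insecurity`), the helper functions, and every value shown are hypothetical and chosen only for illustration.

```python
from collections import defaultdict

# Hypothetical de-identified visit records, already geocoded to a census block ID.
visits = [
    {"patient": "p1", "block": "A", "asthma_exacerbation": True},
    {"patient": "p2", "block": "A", "asthma_exacerbation": False},
    {"patient": "p3", "block": "B", "asthma_exacerbation": False},
    {"patient": "p4", "block": "B", "asthma_exacerbation": False},
    {"patient": "p5", "block": "C", "asthma_exacerbation": True},
]

# Hypothetical publicly reported indicators, keyed by the same block ID.
neighborhood = {
    "A": {"smoking_rate": 0.24, "food_insecurity": 0.31},
    "B": {"smoking_rate": 0.11, "food_insecurity": 0.09},
}

def link_records(visits, neighborhood):
    """Attach neighborhood indicators to each visit by census block ID.

    Returns the linked records and the number of visits that could not be
    linked, so that missing neighborhood data stays visible instead of
    silently disappearing.
    """
    linked, unlinked = [], 0
    for visit in visits:
        context = neighborhood.get(visit["block"])
        if context is None:
            unlinked += 1
            continue
        linked.append({**visit, **context})
    return linked, unlinked

def exacerbation_rate_by_block(linked):
    """Share of linked visits in each block that involved an asthma exacerbation."""
    counts = defaultdict(lambda: [0, 0])  # block -> [exacerbations, visits]
    for record in linked:
        counts[record["block"]][0] += int(record["asthma_exacerbation"])
        counts[record["block"]][1] += 1
    return {block: events / total for block, (events, total) in counts.items()}

linked, unlinked = link_records(visits, neighborhood)
rates = exacerbation_rate_by_block(linked)
```

Reporting the count of unlinked records is a deliberate choice in this sketch: patients whose neighborhoods lack public data are often the same patients who are underrepresented in clinical records.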

Applying AI models to the enhanced dataset enabled PCCI to document a correlation between car ownership and access to prenatal care, suggesting telemedicine and ride-sharing programs could be leveraged to reduce preterm births. The nonprofit also found children living in communities with higher smoking rates are more likely to experience asthma exacerbations, as are children living in areas with high levels of food insecurity, suggesting families may prioritize food needs over medical care to stretch dwindling resources. “Interventions addressing food insecurity such as community farms or adequate SNAP benefits might free up family resources to support the care of vulnerable children with asthma,” says Yolande Pengetnze, MD, MS, a pediatrician and vice president of clinical leadership at PCCI.

AI allows us to capture small differences in risk factors that, when added together, become a substantial risk factor for poor outcomes. You can only visualize that using a model that can measure small increments.
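The arithmetic behind that idea can be illustrated with a small sketch. It assumes a logistic-style model in which each risk factor multiplies a patient’s odds independently. That is a common simplification, not a description of PCCI’s models. The function name `combined_risk`, the baseline risk, and the odds ratios are all hypothetical.

```python
import math

def combined_risk(baseline_risk, odds_ratios):
    """Combine a baseline probability with several odds ratios.

    Assumes each factor acts independently and multiplies the odds,
    which is equivalent to adding its log-odds in a logistic model.
    """
    log_odds = math.log(baseline_risk / (1 - baseline_risk))
    log_odds += sum(math.log(ratio) for ratio in odds_ratios)
    return 1 / (1 + math.exp(-log_odds))

# Hypothetical numbers: a 10% baseline risk and five modest risk factors,
# each raising the odds by 20%.
one_factor = combined_risk(0.10, [1.2])
five_factors = combined_risk(0.10, [1.2] * 5)
```

Under these made-up numbers, a single factor moves the risk from 10 percent to about 12 percent, a difference that is easy to overlook. Five such factors together raise it to roughly 22 percent, more than double the baseline.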

PCCI is using the data to map census blocks where the disease burden of diabetes, hypertension, and asthma is higher, with a goal of highlighting interventions that could improve outcomes for a single patient or a community. “In one area, patients might lack access to a pharmacy. In another, there may be a shortage of smoking cessation programs,” Pengetnze says.